SPECstorage™ Solution 2020_swbuild Result

Copyright © 2016-2023 Standard Performance Evaluation Corporation

|

SPECstorage™ Solution 2020_swbuild ResultCopyright © 2016-2023 Standard Performance Evaluation Corporation |

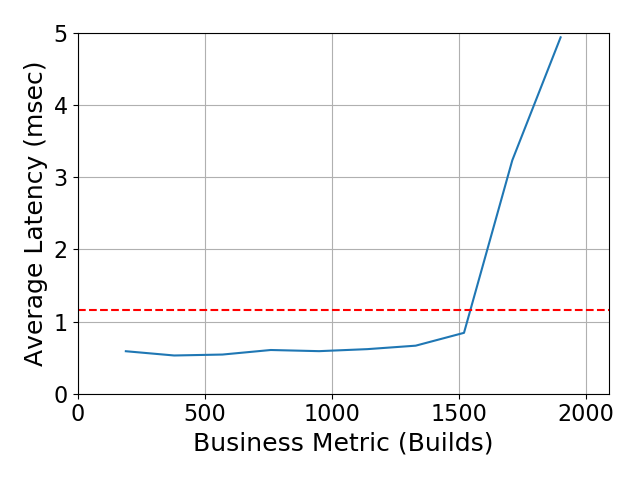

| NetApp Inc. | SPECstorage Solution 2020_swbuild = 1900 Builds |
|---|---|
| NetApp 2-node AFF A900 with FlexGroup | Overall Response Time = 1.16 msec |

| NetApp 2-node AFF A900 with FlexGroup | |
|---|---|
| Tested by | NetApp Inc. |
| Hardware Available | December 2021 |
| Software Available | November 2023 |
| Date Tested | September 2023 |
| License Number | 33 |
| Licensee Locations | Sunnyvale, CA USA |

Designed and built for customers seeking a storage solution for the high demands of enterprise applications, the NetApp high-end flagship all-flash AFF A900 delivers unrivaled performance, superior resilience, and best-in-class data management across the hybrid cloud. With an end-to-end NVMe architecture supporting the latest NVMe SSDs and both the NVMe/FC and NVMe/TCP network protocols, it provides a performance increase of over 50% over its predecessor with ultra-low latency. Powered by ONTAP data management software, it supports non-disruptive scale-out to a cluster of 24 nodes.

ONTAP is designed for massive scaling in a single namespace to over 20PB with over 400 billion files, while spreading the load evenly across the cluster. This makes the AFF A900 a great system for engineering and design applications as well as DevOps. It is particularly well-suited for chip development and software builds, which are typically high file-count environments with heavy data and metadata traffic.

| Item No | Qty | Type | Vendor | Model/Name | Description |
|---|---|---|---|---|---|
| 1 | 1 | Storage System | NetApp | AFF A900 Flash System (HA Pair, Active-Active Dual Controller) | A single NetApp AFF A900 system is a chassis with 2 controllers. A set of 2 controllers comprises a High-Availability (HA) Pair. The words "controller" and "node" are used interchangeably in this document. One NS224 disk shelf is cabled to the AFF A900 controllers, with 24 SSDs per disk shelf. Each AFF A900 HA Pair includes 2048GB of ECC memory, 128GB of NVRAM, 20 PCIe expansion slots, and a set of included I/O ports: 4 x 40/100 GbE ports in slots 4 and 8 of each controller, configured as 100 GbE, with 1 port per card used for 100 GbE data connectivity to clients; and 2 x 100 GbE ports in slot 2 of each controller, configured as 100 GbE, with each card's two paths cabled to the disk shelf. Included are the Core Bundle, Data Protection Bundle, and Security and Compliance Bundle, which include All Protocols, SnapRestore, SnapMirror, FlexClone, Autonomous Ransomware Protection, SnapCenter, and SnapLock. Only the NFS protocol license, available in the Core Bundle, was active in the test. |
| 2 | 4 | Network Interface Card | NetApp | 2-Port 100GbE RoCE QSFP28 X91153A | 1 card in slot 4 and 1 card in slot 8 of each controller; 4 cards per HA pair; used for data and cluster connections. |
| 3 | 2 | Network Interface Card | NetApp | 2-Port 100GbE RoCE QSFP28 X91153A | 1 card in slot 2 of each controller; 2 cards per HA pair; directly attached to disk shelves (without use of a switch). |
| 4 | 1 | Disk Shelf | NetApp | NS224 (24-SSD Disk Shelf) | Disk shelf with capacity to hold up to 24 x 2.5" drives. 2 I/O modules per shelf, each with 2 ports for 100GbE controller connectivity. |
| 5 | 24 | Solid-State Drive | NetApp | 1.92TB NVMe SSD X4016A | NVMe Solid-State Drives (NVMe SSDs) installed in the NS224 disk shelf, 24 per shelf. |
| 6 | 2 | Network Interface Card | Mellanox Technologies | ConnectX-5 MCX516A-CCAT | 2-port 100 GbE NIC, one installed per client. lspci output: Mellanox Technologies MT27800 Family [ConnectX-5] |
| 7 | 1 | Switch | Cisco | Cisco Nexus 9336C-FX2 | Used for Ethernet data connections between clients and storage systems. Only the ports used for this test are listed in this report. See the 'Transport Configuration - Physical' section for connectivity details. |
| 8 | 1 | Switch | Cisco | Cisco Nexus 9336C-FX2 | Used for Ethernet connections of the AFF A900 storage cluster network. Only the ports used for this test are listed in this report. See the 'Transport Configuration - Physical' section for connectivity details. |
| 9 | 2 | Fibre Channel Interface Card | Emulex | Quad Port 32 Gb FC X1135A | Located in slot 3 of each controller; these cards were not used for this test. They were in place because this is a shared-infrastructure lab environment; no I/O was directed through these cards during this test. |
| 10 | 8 | Client | Lenovo | Lenovo ThinkSystem SR650 V2 | Lenovo ThinkSystem SR650 V2 clients. System board machine type is 7Z73CTO1WW, PCIe riser part number R2SH13N01D7. Each client also contains 2 Intel Xeon Gold 6330 CPUs @ 2.00GHz with 28 cores each, 8 DDR4 3200MHz 128GB DIMMs, a 240GB M.2 SATA SSD (part number SSS7A23276), and a 240GB M.2 SATA SSD (part number SSDSCKJB240G7). All 8 clients were used to generate the workload; 1 was also used as the Prime Client. |

| Item No | Component | Type | Name and Version | Description |
|---|---|---|---|---|
| 1 | Linux | Operating System | RHEL 8.4 (Kernel 4.18.0-305.el8.x86_64) | Operating System (OS) for the 8 clients |
| 2 | ONTAP | Storage OS | R9.14.1xN_230918_0000 | Storage Operating System |
| 3 | Data Switch | Operating System | 9.3(3) | Cisco switch NX-OS (system software) |

Storage

| Parameter Name | Value | Description |
|---|---|---|
| MTU | 9000 | Jumbo Frames configured for data ports |

The data network was configured with an MTU of 9000.
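As a rough illustration of why jumbo frames help here, the sketch below estimates how many Ethernet frames a single 65536-byte NFS transfer (the rsize/wsize used on the clients) needs at MTU 9000 versus the standard MTU of 1500. It assumes plain 20-byte IPv4 and 20-byte TCP headers with no options (TCP timestamps were disabled on the clients) and ignores the NFS/RPC header and any segmentation offload, so the counts are approximate.

```python
import math

IPV4_HEADER = 20  # bytes, assuming no IP options
TCP_HEADER = 20   # bytes, assuming no TCP options (tcp_timestamps=0 drops the timestamp option)


def tcp_payload_per_frame(mtu: int) -> int:
    """Maximum TCP payload (MSS) that fits in one IPv4 packet of the given MTU."""
    return mtu - IPV4_HEADER - TCP_HEADER


def frames_needed(transfer_bytes: int, mtu: int) -> int:
    """Approximate number of frames needed to carry transfer_bytes of payload."""
    return math.ceil(transfer_bytes / tcp_payload_per_frame(mtu))


if __name__ == "__main__":
    transfer = 65536  # one NFS read or write at rsize=wsize=65536
    for mtu in (1500, 9000):
        print(mtu, tcp_payload_per_frame(mtu), frames_needed(transfer, mtu))
```

Under these assumptions a 64 KiB transfer needs 8 frames at MTU 9000 instead of 45 at MTU 1500, which means fewer per-packet interrupts and header bytes per operation.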

Clients

| Parameter Name | Value | Description |
|---|---|---|
| rsize,wsize | 65536 | NFS mount options for read and write transfer size, in bytes |
| nconnect | 6 | NFS mount option for the number of TCP connections per mount |
| protocol | tcp | NFS mount option for the transport protocol |
| nfsvers | 3 | NFS mount option for the NFS version |
| nofile | 102400 | Maximum number of open files per user |
| nproc | 10240 | Maximum number of processes per user |
| sunrpc.tcp_slot_table_entries | 128 | Number of TCP RPC slot entries to pre-allocate for in-flight RPC requests |
| net.core.wmem_max | 16777216 | Maximum socket send buffer size, in bytes |
| net.core.wmem_default | 1048576 | Default socket send buffer size, in bytes |
| net.core.rmem_max | 16777216 | Maximum socket receive buffer size, in bytes |
| net.core.rmem_default | 1048576 | Default socket receive buffer size, in bytes |
| net.ipv4.tcp_rmem | 1048576 8388608 33554432 | Minimum, default, and maximum size of the TCP receive buffer, in bytes |
| net.ipv4.tcp_wmem | 1048576 8388608 33554432 | Minimum, default, and maximum size of the TCP send buffer, in bytes |
| net.core.optmem_max | 4194304 | Maximum ancillary buffer size allowed per socket |
| net.core.somaxconn | 65535 | Maximum TCP listen backlog an application can request |
| net.ipv4.tcp_mem | 4096 89600 8388608 | Memory limits for all TCP sockets combined, in 4096-byte pages: minimum, pressure, and maximum |
| net.ipv4.tcp_window_scaling | 1 | Enables TCP window scaling |
| net.ipv4.tcp_timestamps | 0 | Disables TCP timestamps to reduce performance spikes related to timestamp generation |
| net.ipv4.tcp_no_metrics_save | 1 | Prevents TCP from caching connection metrics when connections close |
| net.ipv4.route.flush | 1 | Flushes the routing cache |
| net.ipv4.tcp_low_latency | 1 | Allows TCP to prefer lower latency over maximizing network throughput |
| net.ipv4.ip_local_port_range | 1024 65000 | Local port range used by TCP and UDP traffic when choosing a local port |
| net.ipv4.tcp_slow_start_after_idle | 0 | The congestion window is not reset after an idle period |
| net.core.netdev_max_backlog | 300000 | Maximum number of packets queued on the input side when the interface receives packets faster than the kernel can process them |
| net.ipv4.tcp_sack | 0 | Disables TCP selective acknowledgements |
| net.ipv4.tcp_dsack | 0 | Disables duplicate SACKs |
| net.ipv4.tcp_fack | 0 | Disables forward acknowledgement |
| vm.dirty_expire_centisecs | 30000 | Age at which dirty data becomes eligible for writeout by the kernel flusher threads, in hundredths of a second |
| vm.dirty_writeback_centisecs | 30000 | Interval between periodic wake-ups of the kernel flusher threads that write dirty data to disk, in hundredths of a second |
| vm.swappiness | 0 | Controls how aggressively the kernel swaps out anonymous memory rather than reclaiming page cache |
| vm.vfs_cache_pressure | 0 | Controls the tendency of the kernel to reclaim memory used for caching directory and inode objects |

The client parameters shown above were tuned for communication between the clients and the storage controllers over Ethernet, to optimize data transfer and minimize overhead.
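One way to apply the client kernel settings consistently across all 8 clients is to render them into a sysctl configuration file (for example, a file under /etc/sysctl.d/). The sketch below is a hypothetical helper, not the tooling used for this test. It emits the kernel parameters from the client table in `key = value` form and converts two of the less obvious units: net.ipv4.tcp_mem page counts to bytes (assuming the 4096-byte page size stated in the table) and centiseconds to seconds. nofile and nproc are per-user limits and the NFS entries are mount options, so they are deliberately left out.

```python
PAGE_SIZE = 4096  # bytes per page, as stated for net.ipv4.tcp_mem

# Kernel parameters from the client tuning table (values copied as strings).
CLIENT_SYSCTLS = {
    "sunrpc.tcp_slot_table_entries": "128",
    "net.core.wmem_max": "16777216",
    "net.core.wmem_default": "1048576",
    "net.core.rmem_max": "16777216",
    "net.core.rmem_default": "1048576",
    "net.ipv4.tcp_rmem": "1048576 8388608 33554432",
    "net.ipv4.tcp_wmem": "1048576 8388608 33554432",
    "net.core.optmem_max": "4194304",
    "net.core.somaxconn": "65535",
    "net.ipv4.tcp_mem": "4096 89600 8388608",
    "net.ipv4.tcp_window_scaling": "1",
    "net.ipv4.tcp_timestamps": "0",
    "net.ipv4.tcp_no_metrics_save": "1",
    "net.ipv4.route.flush": "1",
    "net.ipv4.tcp_low_latency": "1",
    "net.ipv4.ip_local_port_range": "1024 65000",
    "net.ipv4.tcp_slow_start_after_idle": "0",
    "net.core.netdev_max_backlog": "300000",
    "net.ipv4.tcp_sack": "0",
    "net.ipv4.tcp_dsack": "0",
    "net.ipv4.tcp_fack": "0",
    "vm.dirty_expire_centisecs": "30000",
    "vm.dirty_writeback_centisecs": "30000",
    "vm.swappiness": "0",
    "vm.vfs_cache_pressure": "0",
}


def render_sysctl_conf(params: dict[str, str]) -> str:
    """Render parameters as sysctl.d-style 'key = value' lines."""
    return "".join(f"{key} = {value}\n" for key, value in params.items())


def tcp_mem_bytes(value: str) -> list[int]:
    """Convert the three tcp_mem page counts (min, pressure, max) to bytes."""
    return [int(pages) * PAGE_SIZE for pages in value.split()]


def centisecs_to_seconds(value: str) -> float:
    """Convert a *_centisecs value (hundredths of a second) to seconds."""
    return int(value) / 100


if __name__ == "__main__":
    print(render_sysctl_conf(CLIENT_SYSCTLS), end="")
    print(tcp_mem_bytes(CLIENT_SYSCTLS["net.ipv4.tcp_mem"]))
    print(centisecs_to_seconds(CLIENT_SYSCTLS["vm.dirty_expire_centisecs"]))
```

The conversions show that the tcp_mem maximum of 8388608 pages corresponds to 32 GiB, and that both dirty-page timers are set to 300 seconds.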

The second M.2 SSD in each client was configured as a dedicated swap space of 224GB.


| Item No | Description | Data Protection | Stable Storage | Qty |
|---|---|---|---|---|
| 1 | 1.92TB NVMe SSDs used for data and storage operating system; used to build three RAID-DP RAID groups per storage controller node in the cluster | RAID-DP | Yes | 24 |
| 2 | 1.92TB NVMe M.2 device, 1 per controller; used as boot media | none | Yes | 2 |

| Number of Filesystems | Total Capacity | Filesystem Type |
|---|---|---|
| 1 | 30TB | NetApp FlexGroup |

The single FlexGroup consumed all data volumes from all of the aggregates across all of the nodes.

The storage configuration consisted of 1 AFF A900 HA pair (2 controller nodes total). The two controllers in the HA pair are connected in an SFO (storage failover) configuration. Together, the 2 controllers (configured as an HA pair) comprise the tested AFF A900 HA cluster. Stated in reverse, the tested AFF A900 HA cluster consists of 1 HA pair of 2 controllers (also referred to as nodes).

Each storage controller was connected to its own and its partner's NVMe drives in a multi-path HA configuration.

All NVMe SSDs were in active use during the test (aside from 1 spare SSD per shelf). In addition to the factory-configured RAID group housing its root aggregate, each storage controller was configured with two 21+2 RAID-DP RAID groups. There was 1 data aggregate on each node, each of which consumed one of the node's two 21+2 RAID-DP RAID groups. This is (21+2 RAID-DP + 1 spare per shelf) x 1 shelf = 24 SSDs total. Sixteen volumes holding benchmark data were created within each aggregate. "Root aggregates" hold ONTAP operating system related files. Note that spare (unused) drive partitions are not included in the "storage and filesystems" table because they held no data during the benchmark execution.
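The drive-count arithmetic in the paragraph above can be checked with a few lines of Python. The sketch only restates figures given in this report (21 data + 2 parity per RAID-DP group, 1 spare per shelf, 1 shelf, 1.92TB per SSD). The raw-capacity figure is plain multiplication and does not account for partitioning, root aggregates, WAFL reserves, or storage efficiency, so it is not comparable to the 30TB filesystem capacity.

```python
DATA_PER_GROUP = 21    # RAID-DP group: 21 data drives (or partitions)
PARITY_PER_GROUP = 2   # RAID-DP: row parity + diagonal parity
SPARES_PER_SHELF = 1
SHELVES = 1
SSD_TB = 1.92          # nominal capacity per SSD


def ssds_total() -> int:
    """(21+2 RAID-DP + 1 spare per shelf) x number of shelves."""
    per_shelf = DATA_PER_GROUP + PARITY_PER_GROUP + SPARES_PER_SHELF
    return per_shelf * SHELVES


def parity_fraction() -> float:
    """Share of each RAID-DP group devoted to parity."""
    return PARITY_PER_GROUP / (DATA_PER_GROUP + PARITY_PER_GROUP)


def raw_capacity_tb() -> float:
    """Nominal raw SSD capacity, before any RAID, partitioning, or reserves."""
    return ssds_total() * SSD_TB


if __name__ == "__main__":
    print(ssds_total(), round(parity_fraction(), 3), round(raw_capacity_tb(), 2))
```

With these inputs the check reproduces the 24-SSD total, and shows that parity takes about 8.7% of each 21+2 group.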

A storage virtual machine or "SVM" was created on the cluster, spanning all storage controller nodes. Within the SVM, a single FlexGroup volume was created using the one data aggregate on each controller. A FlexGroup volume is a scale-out NAS single-namespace container that provides high performance along with automatic load distribution and scalability.
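To make "automatic load distribution" more concrete, the sketch below models the FlexGroup in this test as 32 constituent volumes (16 per aggregate, one data aggregate on each of the 2 nodes) and places each new file on the constituent that currently holds the fewest files. This is a hypothetical toy policy for illustration only. ONTAP's actual placement logic is not described in this report, and the constituent names are invented.

```python
import heapq


def make_constituents(nodes: int = 2, volumes_per_aggregate: int = 16) -> list[str]:
    """Hypothetical constituent names: one data aggregate per node, 16 volumes each."""
    return [
        f"node{n}_aggr1_vol{v:02d}"
        for n in range(1, nodes + 1)
        for v in range(1, volumes_per_aggregate + 1)
    ]


def place_files(file_count: int, constituents: list[str]) -> dict[str, int]:
    """Toy policy: put each new file on the constituent with the fewest files so far."""
    heap = [(0, name) for name in constituents]  # (files held, constituent name)
    heapq.heapify(heap)
    counts = dict.fromkeys(constituents, 0)
    for _ in range(file_count):
        held, name = heapq.heappop(heap)      # least-loaded constituent
        counts[name] = held + 1
        heapq.heappush(heap, (held + 1, name))
    return counts


if __name__ == "__main__":
    vols = make_constituents()
    counts = place_files(1000, vols)
    print(len(vols), max(counts.values()) - min(counts.values()))
```

Under this toy policy the file counts of any two constituents never differ by more than one. A real system also has to weigh free space, file sizes, and locality, which is why this is only an analogy.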

| Item No | Transport Type | Number of Ports Used | Notes |
|---|---|---|---|
| 1 | 100GbE | 12 | For the client-to-storage network, the AFF A900 Cluster used a total of 4x 100 GbE connections from storage to the switch, communicating via NFSv3 over TCP/IP to 8 clients, via 1x 100GbE connection to the switch for each client. MTU=9000 was used for data switch ports. |
| 2 | 100GbE | 4 | The Cluster Interconnect network is connected via 100 GbE to a Cisco 9336C-FX2 switch, with 4 connections to each HA pair. |

Each AFF A900 HA pair used 4x 100 GbE ports for data transport connectivity to clients (through a Cisco 9336C-FX2 switch), Item 1 above. Each of the clients driving the workload used one 100 GbE port for data transport. All ports on the Item 1 network used MTU=9000. The Cluster Interconnect network, Item 2 above, also used MTU=9000. All interfaces associated with dataflow are visible to all other interfaces associated with dataflow.
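A back-of-the-envelope view of the data network follows from the port counts above: 8 clients with one 100 GbE port each, and the HA pair with 4 x 100 GbE ports. The sketch converts nominal line rates to bytes per second. It ignores Ethernet, IP, TCP, and RPC overhead, so the throughput actually achievable is lower than these figures.

```python
LINK_GBPS = 100  # nominal line rate of each 100 GbE port

CLIENT_PORTS = 8   # 1 x 100 GbE per client, 8 clients
STORAGE_PORTS = 4  # 2 x 100 GbE per A900 node, 2 nodes


def aggregate_gbps(ports: int, link_gbps: int = LINK_GBPS) -> int:
    """Total nominal line rate of a group of ports, in Gb/s."""
    return ports * link_gbps


def gbps_to_gbytes_per_s(gbps: float) -> float:
    """Convert gigabits per second to gigabytes per second (8 bits per byte)."""
    return gbps / 8


if __name__ == "__main__":
    client_side = aggregate_gbps(CLIENT_PORTS)
    storage_side = aggregate_gbps(STORAGE_PORTS)
    bottleneck = min(client_side, storage_side)
    print(client_side, storage_side, gbps_to_gbytes_per_s(bottleneck))
```

The storage side has half the client-side line rate (400 vs 800 Gb/s). On the data network, it is the narrower end, at a nominal 50 GB/s.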

| Item No | Switch Name | Switch Type | Total Port Count | Used Port Count | Notes |
|---|---|---|---|---|---|
| 1 | Cisco Nexus 9336C-FX2 | 100GbE | 36 | 12 | 8 client-side 100 GbE data connections, 1 port per client; 4 storage-side 100 GbE data connections, 2 per A900 node. Only the ports on the Cisco Nexus 9336C-FX2 used for the solution under test are included in the total port count. |
| 2 | Cisco Nexus 9336C-FX2 | 100GbE | 36 | 4 | 2 ports per A900 node, for Cluster Interconnect. |

| Item No | Qty | Type | Location | Description | Processing Function |
|---|---|---|---|---|---|
| 1 | 4 | CPU | Storage Controller | 2.20 GHz Intel Xeon Platinum 8352Y | NFS, TCP/IP, RAID and Storage Controller functions |
| 2 | 16 | CPU | Client | 2.00 GHz Intel Xeon Gold 6330 | NFS Client, Linux OS |

Each of the 2 NetApp AFF A900 Storage Controllers contains 2 Intel Xeon Platinum 8352Y processors with 32 cores each; 2.20 GHz, hyperthreading disabled. Each client contains 2 Intel Xeon Gold 6330 processors with 28 cores at 2.00 GHz, hyperthreading enabled.
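The processor counts in the table can be cross-checked against this description: 2 controllers with 2 CPUs each gives the 4 storage CPUs, and 8 clients with 2 CPUs each gives the 16 client CPUs. The sketch extends the check to core and hardware-thread totals, using the per-CPU core counts and hyperthreading settings stated in this report. It assumes 2 hardware threads per core when hyperthreading is enabled.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HostGroup:
    hosts: int
    cpus_per_host: int
    cores_per_cpu: int
    hyperthreading: bool

    @property
    def cpus(self) -> int:
        return self.hosts * self.cpus_per_host

    @property
    def cores(self) -> int:
        return self.cpus * self.cores_per_cpu

    @property
    def threads(self) -> int:
        # Assumption: 2 hardware threads per core with hyperthreading enabled.
        return self.cores * (2 if self.hyperthreading else 1)


storage = HostGroup(hosts=2, cpus_per_host=2, cores_per_cpu=32, hyperthreading=False)
clients = HostGroup(hosts=8, cpus_per_host=2, cores_per_cpu=28, hyperthreading=True)

if __name__ == "__main__":
    for label, group in (("storage", storage), ("clients", clients)):
        print(label, group.cpus, group.cores, group.threads)
```

This gives 128 cores (128 threads) across the two storage controllers and 448 cores (896 threads) across the eight clients.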

| Description | Size in GiB | Number of Instances | Nonvolatile | Total GiB |
|---|---|---|---|---|
| Main Memory for NetApp AFF A900 HA Pair | 2048 | 1 | V | 2048 |
| NVDIMM (NVRAM) Memory for NetApp AFF A900 HA pair | 128 | 1 | NV | 128 |
| Memory for each of 8 clients | 1024 | 8 | V | 8192 |
| Grand Total Memory Gibibytes | | | | 10368 |

Each storage controller has main memory that is used for the operating system and for caching filesystem data. Each controller also has NVRAM; see "Stable Storage" for more information.

The AFF A900 utilizes non-volatile battery-backed memory (NVRAM) for write caching. When a file-modifying operation is processed by the filesystem (WAFL) it is written to system memory and journaled into a non-volatile memory region backed by the NVRAM. This memory region is often referred to as the WAFL NVLog (non-volatile log). The NVLog is mirrored between nodes in an HA pair and protects the filesystem from any SPOF (single-point-of-failure) until the data is de-staged to disk via a WAFL consistency point (CP). In the event of an abrupt failure, data which was committed to the NVLog but has not yet reached its final destination (disk) is read back from the NVLog and subsequently written to disk via a CP.
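The write path described above (journal to the NVLog, mirror to the HA partner, destage at a consistency point, replay after an abrupt failure) can be illustrated with a small in-memory model. This is a hypothetical sketch for explanation only. The class and method names are invented, "disk" and "NVRAM" are plain Python containers, and it does not reflect how WAFL or ONTAP are actually implemented.

```python
class Node:
    """Toy controller: volatile memory, a local NVLog, a partner mirror, and disk."""

    def __init__(self, name: str):
        self.name = name
        self.memory: dict[str, bytes] = {}                 # volatile, lost on crash
        self.nvlog: list[tuple[str, bytes]] = []           # survives a crash (NVRAM)
        self.partner_nvlog: list[tuple[str, bytes]] = []   # mirror of partner's NVLog
        self.disk: dict[str, bytes] = {}
        self.partner: "Node | None" = None

    def write(self, key: str, data: bytes) -> None:
        """Apply a modifying operation and journal it before acknowledging."""
        self.memory[key] = data
        self.nvlog.append((key, data))
        if self.partner is not None:
            self.partner.partner_nvlog.append((key, data))  # HA mirror
        # Only at this point would the client receive its acknowledgement.

    def consistency_point(self) -> None:
        """Destage in-memory changes to disk, then discard the journal entries."""
        self.disk.update(self.memory)
        self.nvlog.clear()
        if self.partner is not None:
            self.partner.partner_nvlog.clear()

    def crash(self) -> None:
        """Abrupt failure: volatile memory is lost, the NVLog is not."""
        self.memory.clear()

    def replay(self) -> None:
        """Read back logged-but-not-destaged writes, then write them via a CP."""
        for key, data in self.nvlog:
            self.memory[key] = data
        self.consistency_point()


def make_ha_pair() -> tuple[Node, Node]:
    n1, n2 = Node("node1"), Node("node2")
    n1.partner, n2.partner = n2, n1
    return n1, n2


def demo() -> tuple[dict[str, bytes], int, dict[str, bytes]]:
    """Write, checkpoint, write again, crash before the next CP, then replay."""
    n1, n2 = make_ha_pair()
    n1.write("f1", b"a")
    n1.consistency_point()           # f1 reaches disk
    n1.write("f2", b"b")             # f2 is in memory, NVLog, and partner mirror only
    n1.crash()
    before = dict(n1.disk)           # f2 is not on disk yet
    mirrored = len(n2.partner_nvlog) # the partner still holds a copy of f2
    n1.replay()
    return before, mirrored, dict(n1.disk)
```

In the demo, the write that had not yet reached disk survives the crash because it was journaled, and a copy is also held by the partner.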

All clients accessed the FlexGroup from all the available network interfaces.

Unlike a general-purpose operating system, ONTAP does not provide mechanisms for non-administrative users to run third-party code. Because of this, ONTAP is not affected by either the Spectre or Meltdown vulnerabilities. The same is true of all ONTAP variants, including both ONTAP running on FAS/AFF hardware and virtualized ONTAP products such as ONTAP Select and ONTAP Cloud. In addition, FAS/AFF BIOS firmware does not provide a mechanism to run arbitrary code and thus is not susceptible to either the Spectre or Meltdown attacks. More information is available from https://security.netapp.com/advisory/ntap-20180104-0001/.

None of the components used to perform the test were patched with Spectre or Meltdown patches (CVE-2017-5754, CVE-2017-5753, CVE-2017-5715).

ONTAP Storage Efficiency techniques including inline compression and inline deduplication were enabled by default, and were active during this test. Standard data protection features, including background RAID and media error scrubbing, software validated RAID checksum, and double disk failure protection via double parity RAID (RAID-DP) were enabled during the test.

Please reference the configuration diagram. 8 clients were used to generate the workload; 1 of the clients also acted as the Prime Client to control the 8 workload clients. Each client used one 100 GbE connection through a Cisco Nexus 9336C-FX2 switch. Each storage HA pair had 4x 100 GbE connections to the data switch. The filesystem consisted of one ONTAP FlexGroup. The clients mounted the FlexGroup volume as an NFSv3 filesystem. The ONTAP cluster provided access to the FlexGroup volume on every 100 GbE port connected to the data switch (4 ports total). Each of the 2 cluster nodes had 1 Logical Interface (LIF) per 100 GbE port, for a total of 2 LIFs per node and 4 LIFs for the AFF A900 cluster. Each client created mount points across those 4 LIFs symmetrically.
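The symmetric mount layout described above can be sketched as a round-robin assignment of each client's mount points to the 4 data LIFs, using the NFS mount options from the client tuning table (on Linux the transport option is spelled `proto=tcp`). The LIF addresses (from the 192.0.2.0/24 documentation range), the export path, the mount-point names, and the number of mount points per client are all hypothetical placeholders, since the report does not list them.

```python
MOUNT_OPTIONS = "rsize=65536,wsize=65536,nconnect=6,proto=tcp,nfsvers=3"

# Hypothetical placeholders: the report does not list LIF addresses or paths.
LIFS = ["192.0.2.11", "192.0.2.12", "192.0.2.21", "192.0.2.22"]  # 2 LIFs per node
EXPORT = "/flexgroup"


def mount_commands(client_index: int, mounts_per_client: int,
                   lifs: list[str] = LIFS) -> list[str]:
    """Build mount commands that spread one client's mount points over all LIFs.

    Offsetting by client_index staggers the starting LIF, so that across
    clients the first mount point does not always land on the same LIF.
    """
    cmds = []
    for m in range(mounts_per_client):
        lif = lifs[(client_index + m) % len(lifs)]
        cmds.append(f"mount -t nfs -o {MOUNT_OPTIONS} {lif}:{EXPORT} /mnt/fg{m}")
    return cmds


if __name__ == "__main__":
    for client in range(8):  # 8 workload clients
        for cmd in mount_commands(client, mounts_per_client=4):
            print(cmd)
```

With 4 mount points per client (an assumption), each client touches every LIF once and each LIF serves exactly 8 mounts across the 8 clients.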


NetApp is a registered trademark and "Data ONTAP", "FlexGroup", and "WAFL" are trademarks of NetApp, Inc. in the United States and other countries. All other trademarks belong to their respective owners and should be treated as such.

Generated on Tue Oct 10 13:27:17 2023 by SpecReport
